About me

I'm a fourth-year PhD student at UW CSE, advised by Prof. Jon Froehlich in the Makeability Lab.

My research interests lie at the intersection of Human-Computer Interaction (HCI), Applied Computer Vision, and LLM Agents. I'm currently pursuing two distinct but connected HCI+AI research directions:

First, I creatively apply state-of-the-art (SOTA) AI technology to build new AI-assisted design tools. Examples include using generative models to create sound effects for augmented reality (AR), and combining multimodal large language models (MLLMs) with monocular depth estimation to create 2.5D visual effects.

Second, I am building next-generation indoor mapping and accessibility assessment tools using RGB and LiDAR data.

Recent Experiences

-

Apple AIML, Seattle

Machine Learning Intern. Apr 2025-Sep 2025

-

Adobe Research, San Francisco

Research Scientist Intern. Jun 2024-Sep 2024

-

Adobe Research, San Jose

Research Scientist Intern. Jun 2023-Sep 2023

-

Microsoft Research Asia, Beijing

Research Intern. Jun 2020-Mar 2021

Education

-

University of Washington

PhD in Computer Science & Engineering, 2021-2026 (expected)

-

University of Washington

Master's in Computer Science & Engineering, 2021-2024

-

Tsinghua University

Master of Engineering, 2018-2021

-

Tsinghua University

Bachelor of Architecture, 2014-2018

Selected Publications

-

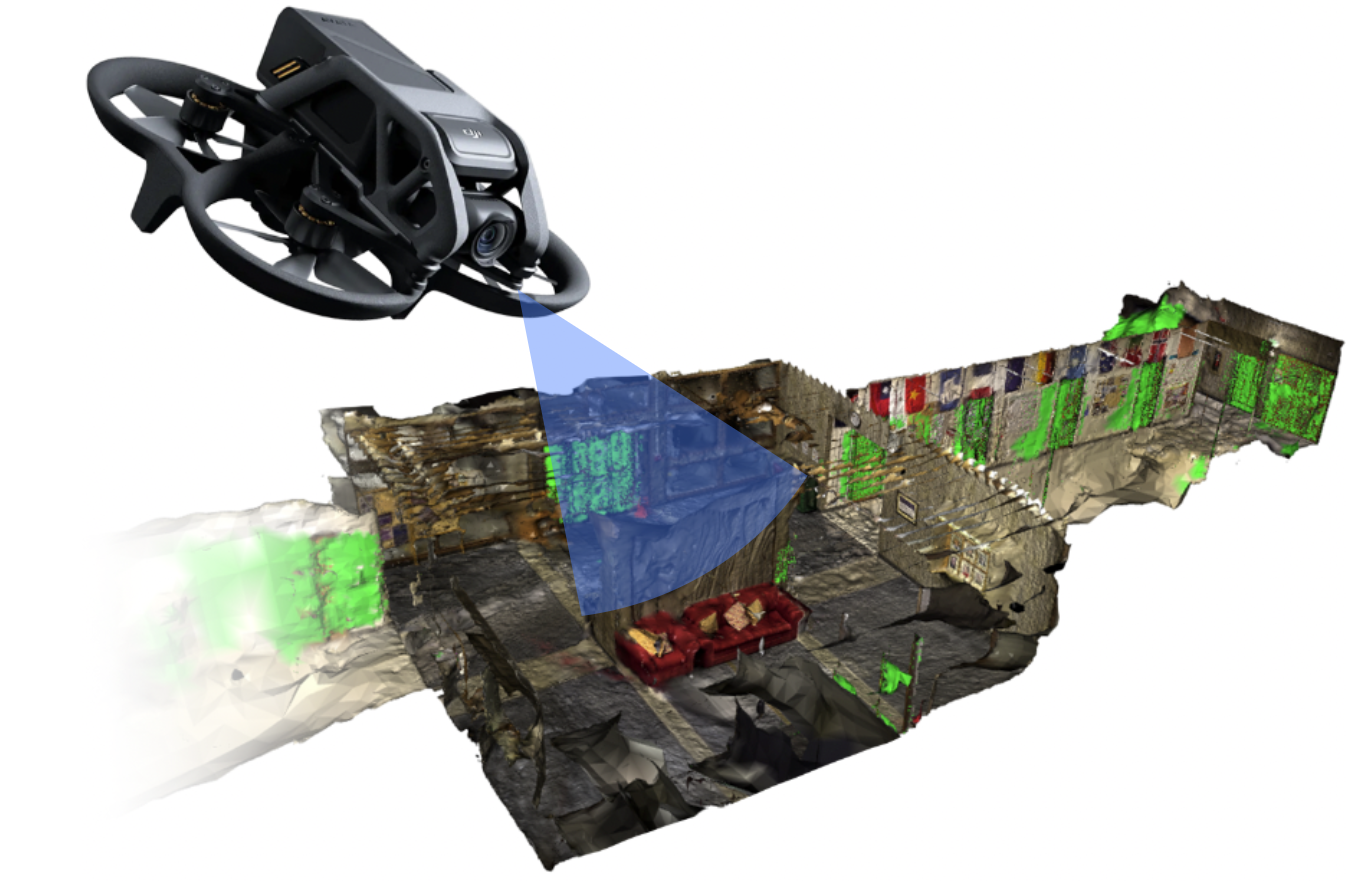

A Demo of DIAM: Drone-based Indoor Accessibility Mapping

UIST'24 Demo

-

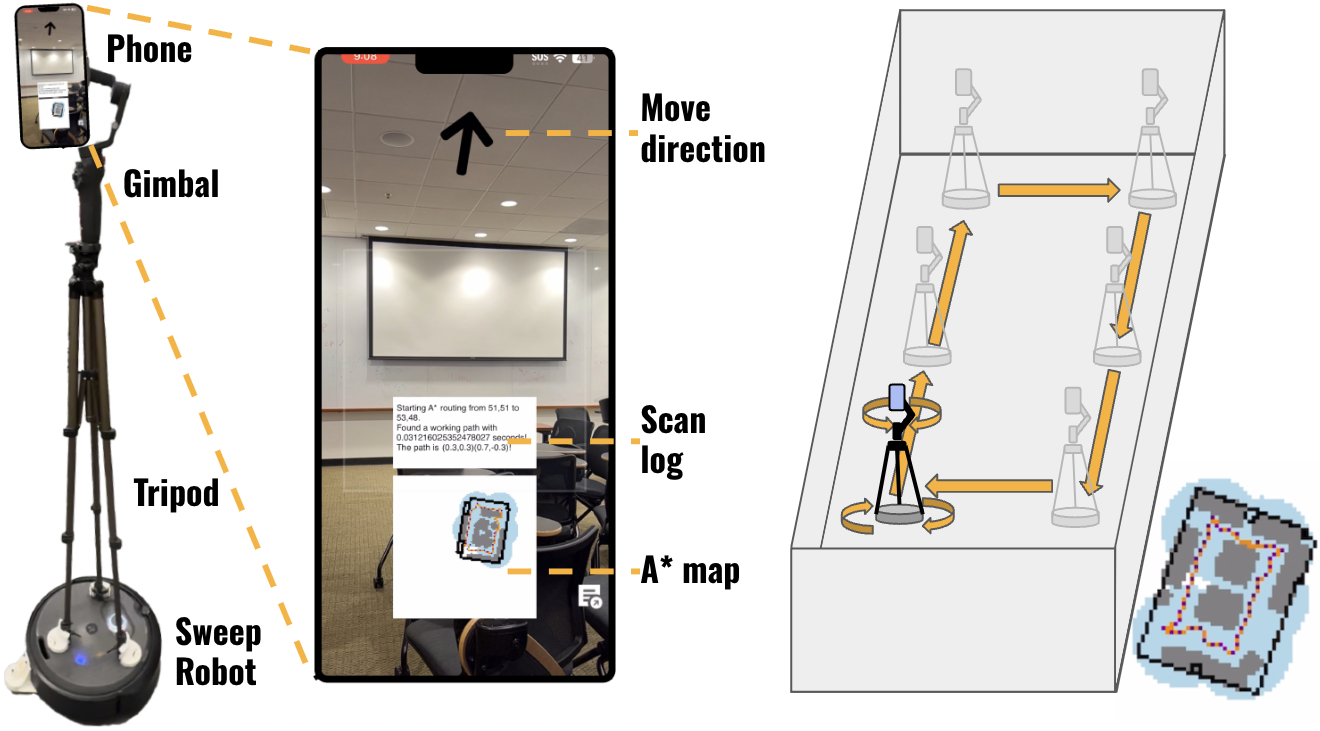

RAIS: Towards A Robotic Mapping and Assessment Tool for Indoor Accessibility Using Commodity Hardware

ASSETS'24 Poster

-

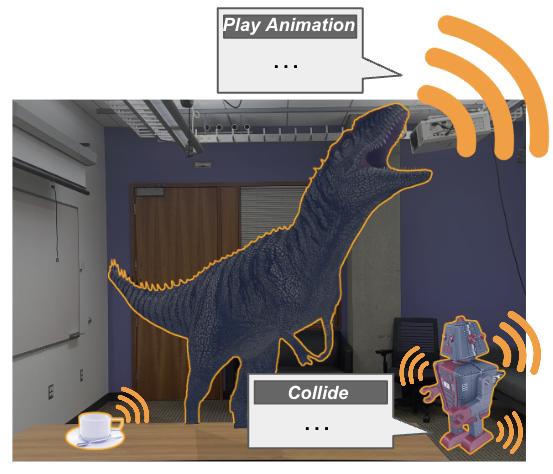

SonifyAR: Context-Aware Sound Generation in Augmented Reality

UIST'24

-

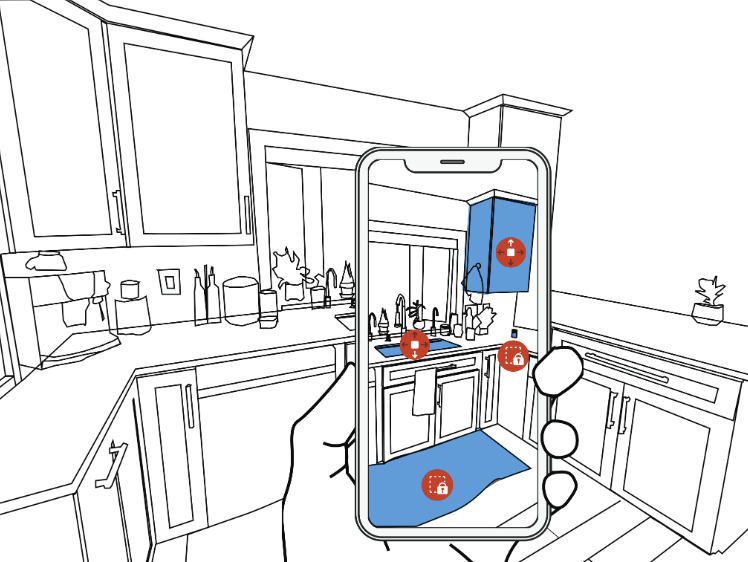

RASSAR: Room Accessibility and Safety Scanning in Augmented Reality

CHI'24

-

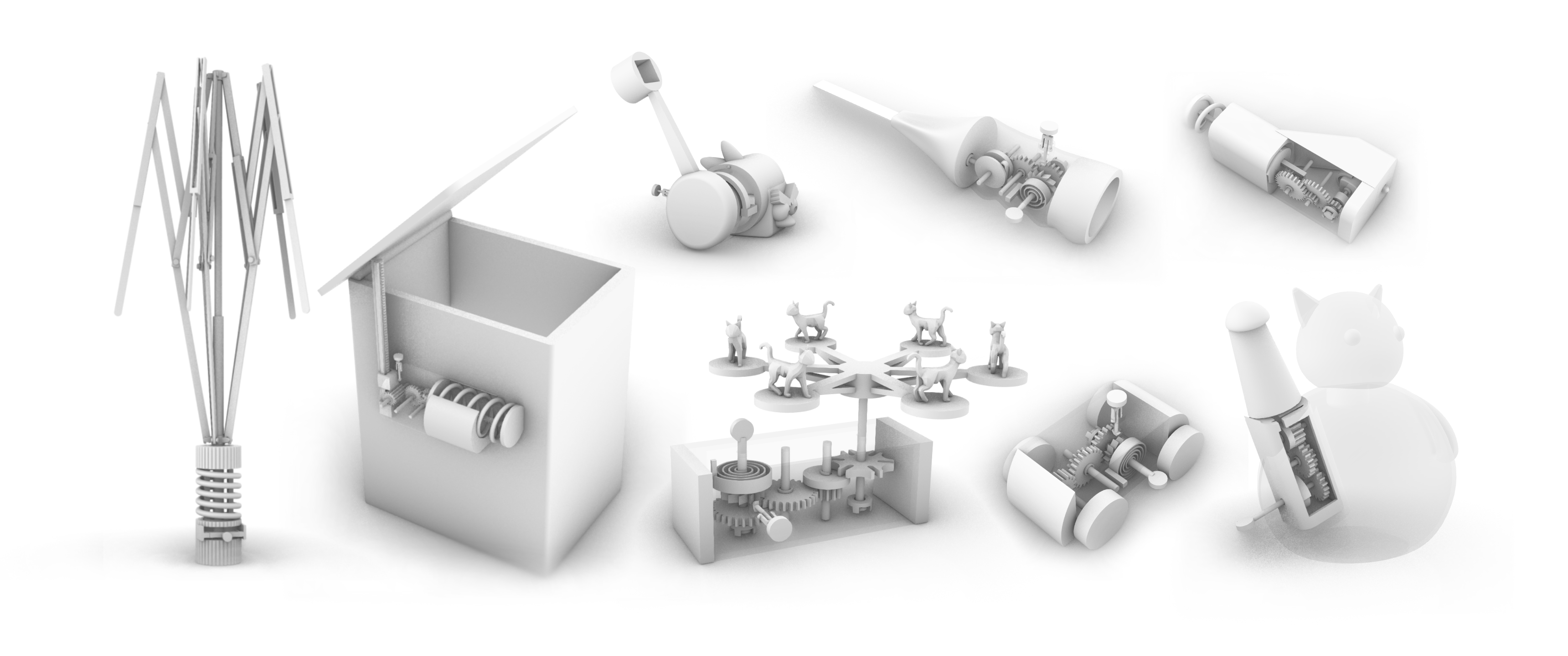

Kinergy: Creating 3D Printable Motion using Embedded Kinetic Energy

UIST'22

-

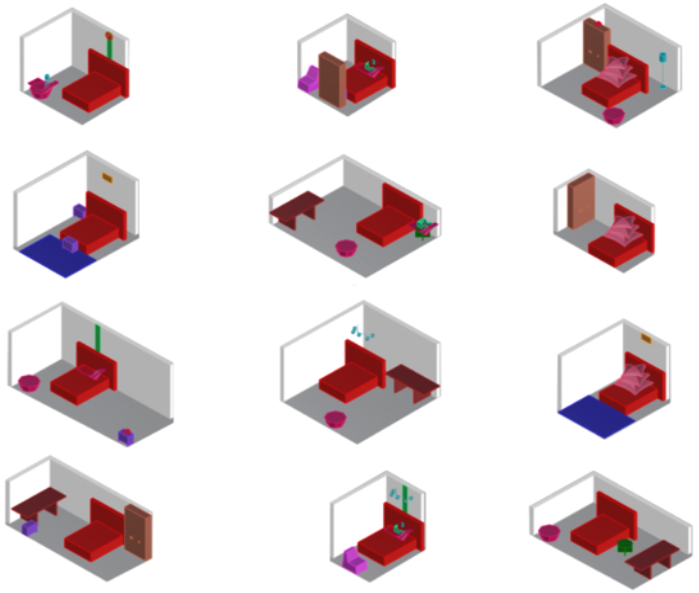

Interior Layout Generation Based on Scene Graph and Graph Generation Model

Design Computing and Cognition'20

-

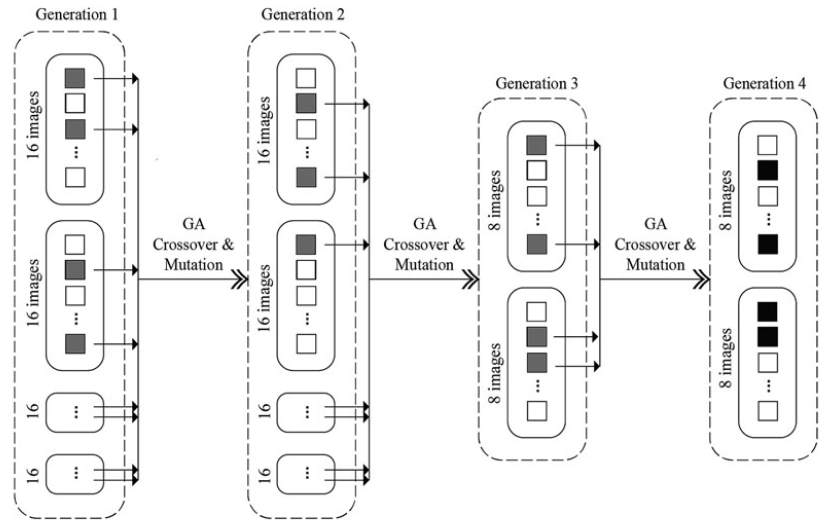

Category, process, and recommendation of design in an interactive evolutionary computation interior design experiment: a data-driven study

AI EDAM, Vol. 34, No. 2